A few ways to think about new scales in journalism studies

Or, dashing out some ideas for future failure in my methods class

Ah yes, this summer’s fun and joy is a three-week, 4.5-hour-a-day class in Measurement, Scaling, and Dimensional Analysis through Michigan’s famous ICPSR methods summer camp. Except I’m not in Michigan (clearly), so I’ve been tuning in via Zoom and am catching up asynchronously right now. Being able to take this class is an achievement in itself - to follow along, it’s been helpful to have a decent background in matrix algebra, and I definitely needed familiarity with OLS regression and R code, not to mention reading proofs and following equations: all skills that I simply didn’t have at this time last year.

So what might one do with such a class? The reason I’m taking it is that eventually, I’d like to develop my own index: some systematic way to bring together the qualitative observations about variables that I think matter for predicting various outcomes for news and information availability and take-up at the community level. But I realize there’s more that can be done to uncover latent, broader, and semi-measurable cultural indicators - if we can use these measurement techniques to come up with measures for ideology, then surely I can jerry-rig some scales to measure some of the latent variables that are not actually asked about in any meaningful way in survey research.

So, a few major (MAJOR) assumptions have to be made before using a lot of these mini-approaches so far: the data have to have some sort of normality; you have to buy that several items give you better reliability than a single item (the idea being that random measurement error averages out across items, in the same spirit as the central limit theorem); and, fundamentally, that a set of specific questions can be mushed together in a theoretically coherent way so as to stand in for some unobserved variable you think lurks beneath. That said, here’s what I’ve been thinking about measuring:

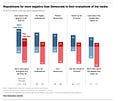

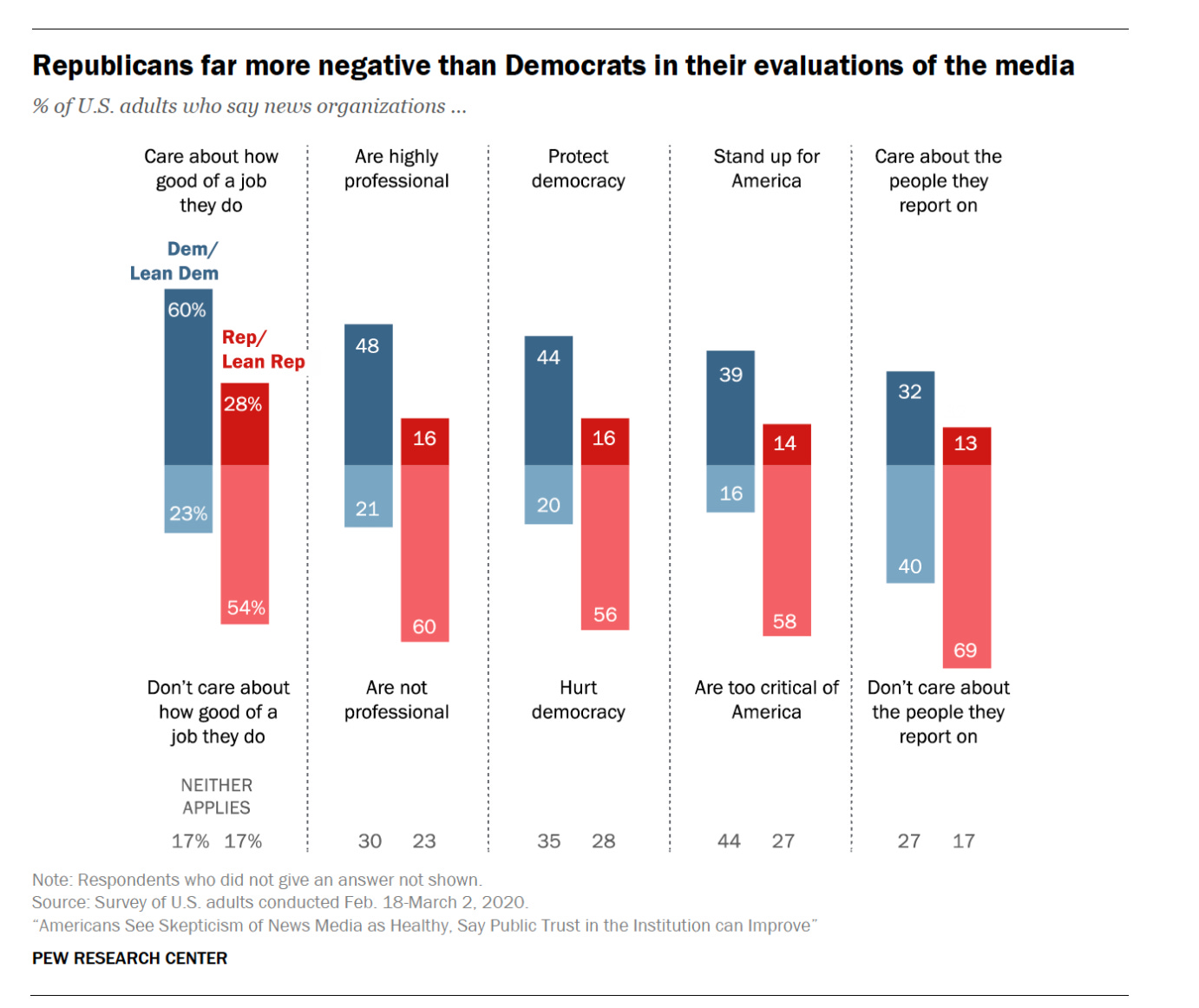

Perceptions of Media Bias: oh man, there are so many ways to measure trust and trust in media, including scale development papers. There are also many ways that Pew and other surveys ask about media bias - including at the out-party level for various news brands. But we don’t have a great measure that can help predict “how biased does this person think media is” - and thankfully, Pew has a lot of over-time data that can be used to validate the scales (nope, scratch that: the best study is a single one, from 2020), assuming that the measurement itself hasn’t changed and still works to measure what we think it does over time.

The point is - there is some latent, bigger conceptual variable that is related/lies underneath these more specific measures - maybe even one or two - and perceptions of media bias give us a more conceptually refined way of thinking about how Americans view news versus a more general relational and ambiguous “trust” in news media, which can take into account feelings about the importance of skepticism, faith in institutions overall, etc.

Corruption avoidance/Doing the right thing: One of the big issues in thinking about how news might help to create a more accountable government or mitigate public corruption is that we only know about corruption when (politicians) get caught. It’s a problem I write about in this paper here. We don’t know about all the corruption that didn’t happen because politicians had the sense that maybe they were going to get nabbed for wrongdoing, most likely via a federal enforcement agency or thanks to the news media publicizing evidence of malfeasance. Places that are less corrupt seem to have more news media overall, in terms of circulation, but I’m curious about the flipside - how might we measure the impact of simply having media present on the corruption that doesn’t occur as a result?

I’m extremely interested in the efficacy of journalism to help inspire good government and good business practices (increasingly, we see that the two are deeply intertwined - more corrupt governments/pols are likely to be doing favors for the businesses that fund their campaign coffers - or just their personal wealth). But as I thought about places that have limited press freedom and autocratic regimes (thanks to my recent East-West Center conference), it’s much harder to measure the actual underlying corruption in these places that does/does not occur because of a present watchdog. To this end, we might have to assume that the greater the state attacks on the press, the more effective a government might perceive the news media to be in catching corruption in action. So actually, greater incidences of press crackdowns might speak to a more latent institutional “fear of being found out for being corrupt” - which is a related but somewhat different way of getting to the question of just how much journalism might be helpful in creating the conditions for healthy democracy/facilitating healthy democracy.

This obsession with corruption is related to another individual-level measure that has some sort of next-level version: pessimism/cynicism about American democracy. This is related to the extent to which Americans perceive our media as corrupt, see the government as broken, and see each other as antagonists to some sort of greater American project. As I move toward some sort of American exceptionalism project, I think that this question of what we expect of the US and how it falls short needs some specific teasing out - and I will need to spend more time thinking about how various opinion variables can speak to this, and how to put media into this conversation, as it is often a secondary concern in a lot of these overall attitudes surveys that political scientists do.

Likelihood of Media Financial Success: We are throwing spaghetti at the wall when it comes to the myriad ways in which we think various elements of “news revenue models” contribute to the sustainability of a news organization. The “likelihood” suggests a Maximum Likelihood Estimation approach - we’d be looking for the parameter values that make the outcomes we actually observe most likely, with “financial success” defined in terms of a probability. BUT, how are we going to figure out what elements go into this MLE model? This is, I think, where we might look to building some sort of scale or developing a factor analysis approach, whose output can then feed into the larger MLE model - possibly a more parsimonious model that takes the “factors” and uses them in some overall “prediction” equation/model.
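A minimal sketch of what that “factors into a prediction model” idea could look like on paper. Everything here is an assumption for illustration: that financial success is coded as a binary outcome $y_i$ for news organization $i$, that there are $m$ factor scores $f_{i1}, \dots, f_{im}$ from a prior factor analysis, and that a logistic link is a reasonable choice.

```latex
% Probability of financial success for organization i (logistic link is an assumption)
\Pr(y_i = 1 \mid f_{i1}, \dots, f_{im}) = p_i
  = \frac{1}{1 + \exp\!\left(-\left(\beta_0 + \sum_{k=1}^{m} \beta_k f_{ik}\right)\right)}

% Log-likelihood over n organizations; the ML estimates are the betas that maximize it
\ell(\beta_0, \dots, \beta_m) = \sum_{i=1}^{n} \Big[ y_i \log p_i + (1 - y_i) \log (1 - p_i) \Big]
```

The parsimony comes from $m$ being much smaller than the number of raw revenue-model variables that went into the factors.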

News and Information Capacities of Communities: This seems to me to be some sort of larger index of variables you’d add up together to get some score so you could measure one place compared to another, and some people seem to think they’ve accomplished this already. But to me, there are many elements that go beyond the income/education/politics of a place and the availability of professional news media that probably need to be taken into account, including elements of the built environment like the presence of various community spaces as well as the larger history of the community as a whole (is this a place down on its luck?) - and so I’ve got a lot more thinking to do on what this measure might look like.

So, thinking through some of these approaches in terms of the media bias question:

Summative rating scales:

You could think about a media bias measure through a summative rating scale, basically mushing a series of related questions together. ((I’ve learned that Cronbach’s alpha is not a measurement of internal consistency, but rather of the reliability of the scale overall (which we can gauge via a score of each specific question relative to the others). I think.))
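To make the “mushing together” concrete, here is a rough sketch in Python using only the standard library. The responses are made up, the three items are hypothetical 4-point questions, and treating the third item as reverse-worded is an assumption for illustration. It reverse-codes, sums the items into a scale score, computes Cronbach’s alpha from the item and total-score variances, and uses the Spearman-Brown formula to show why more items should beat a single item on reliability.

```python
from statistics import variance

# Hypothetical answers from five respondents to three 4-point items
# (1 = none at all ... 4 = a great deal). These numbers are invented.
RESPONSES = [
    [4, 3, 1],
    [3, 3, 2],
    [2, 2, 3],
    [1, 2, 4],
    [3, 4, 2],
]
REVERSED_ITEMS = {2}  # assumption: the third item is worded in the opposite direction
SCALE_MIN, SCALE_MAX = 1, 4


def reverse_code(rows, reversed_items, low=SCALE_MIN, high=SCALE_MAX):
    """Flip reversed items so a high number means the same thing on every item."""
    return [
        [(low + high - v) if j in reversed_items else v for j, v in enumerate(row)]
        for row in rows
    ]


def cronbach_alpha(rows):
    """alpha = k/(k-1) * (1 - sum of item variances / variance of the summed score)."""
    k = len(rows[0])
    item_vars = [variance([row[j] for row in rows]) for j in range(k)]
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def spearman_brown(avg_r, k):
    """Projected reliability of k parallel items with average inter-item correlation avg_r."""
    return k * avg_r / (1 + (k - 1) * avg_r)


coded = reverse_code(RESPONSES, REVERSED_ITEMS)
scale_scores = [sum(row) for row in coded]  # one summated rating score per respondent
alpha = cronbach_alpha(coded)

print(scale_scores)        # [11, 9, 6, 4, 10]
print(round(alpha, 3))     # 0.918
print(round(spearman_brown(0.3, 1), 2), round(spearman_brown(0.3, 5), 2))  # 0.3 0.68
```

With an average inter-item correlation of 0.3, one item has a projected reliability of 0.3 while five such items get to about 0.68, which is the “more items, better reliability” assumption in numbers.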

Here are some questions that could be used to come up with some sort of perceptions of media bias scale - unfortunately, the data itself comes from 2020, which now seems ancient.

Measured as: none at all, not too much, a fair amount, a great deal

% of US adults who say they have _ confidence that journalists act in the best interests of the public

% of Americans who say it is better if the public is skeptical rather than trusting of news media

% of Americans who say it is possible to improve the level of confidence in the news media (might need to remember this is almost reverse coded - its valence is positive rather than negative)

And then, basically, all of these measures:

Now, the questions below get to maybe something different - perceptions of accuracy in the news media, which is perhaps related to a trust measure, but maybe not a media bias measure?

Thinking about the news media overall, what is the most negative thing they do, if anything? (question from 2012)

Would a scale that predicted something more directly based in affect (How much Americans dislike news media/news dislike scale) - get at a similar or different concept? Not sure. But that is where factor analysis comes in, too.

Factor Analysis

So, that is, we think that there is some underlying “factor” that can explain the way that these questions “hang” together - and so we’re going backwards, using this larger set of questions to help us assess the presence of possible underlying, latent variables that are a sort of “next” level, more reduced version of these more specific variables. As per the UCLA page:

The basic assumption of factor analysis is that for a collection of observed variables there are a set of underlying variables called factors (smaller than the observed variables), that can explain the interrelationships among those variables.

But but but, we’ve got to make an assumption that the questions have some shared variance among them, plus some level of unique variance of their own, due to both error and just the general “stochastic” part of the variable, if you want to think about it this way. Otherwise you’re doing another method, Principal Component Analysis, which presumes that all of the variance is shared - that is, it analyzes the total variance of the items without setting aside a unique part for each one.
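The shared-versus-unique split can be shown with a few lines of arithmetic. This is only a sketch: the item names and loadings below are invented, and it assumes standardized items (variance of 1) loading on a single factor, so that the squared loading is the shared part (the communality) and the remainder is the unique part.

```python
# Hypothetical standardized loadings on a single "perceived media bias" factor.
# The item names and numbers are invented for illustration.
LOADINGS = {
    "journalists_act_in_public_interest": 0.8,
    "better_to_be_skeptical": 0.7,
    "confidence_can_improve": 0.6,
}


def split_variance(loading):
    """Split a standardized item's variance (1.0) into shared and unique parts."""
    communality = loading ** 2      # variance explained by the common factor
    uniqueness = 1.0 - communality  # item-specific variance plus error
    return communality, uniqueness


for item, loading in LOADINGS.items():
    shared, unique = split_variance(loading)
    print(f"{item}: shared={shared:.2f}, unique={unique:.2f}")
```

Factor analysis models only the shared column; a principal components approach would work with each item’s full variance of 1.0.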

What I like (I think) about factor analysis is that

a) you can work backwards but have some sense of precision as to whether various elements of the questions you’re thinking about as mattering actually matter, at a question per question level

b) to some degree, if you assume the relationship exists and there is correlation across the underlying observed variables, the inferential part of “statistical significance” doesn’t really matter - it’s not part of how we think about this model

c) there is a model that exists in the world that does, in fact, help us understand what contributes to some overall feeling/perception/value (to me, it’s a reverse use of factor in the writerly/english sense: the factors that lead to a particular, larger end value)

d) causality matters less - it doesn’t really matter whether the various observed variables “cause” the feeling/perception, what matters is that inherently, there is an association between the various observed variables and the other variables, and thus, to some larger bigger variable. But which causes which matters less because they are a sum/components of this larger, latent value/variable.
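The “working backwards” in (a) can be sketched in the simplest possible case: three items and one factor. Under that model the correlation between any two items is the product of their loadings, so the loadings can be solved for directly from the three pairwise correlations. The correlations below are hypothetical, and this toy calculation is not how statistical software estimates factor models in general (that is usually iterative); it only illustrates the logic, and it assumes all three correlations are positive.

```python
from math import sqrt


def one_factor_loadings(r12, r13, r23):
    """Solve for three standardized loadings on one factor from pairwise correlations.

    Uses r_jk = loading_j * loading_k, which holds under a one-factor model
    with uncorrelated unique parts. Assumes all three correlations are positive.
    """
    l1 = sqrt(r12 * r13 / r23)
    l2 = sqrt(r12 * r23 / r13)
    l3 = sqrt(r13 * r23 / r12)
    return l1, l2, l3


# Hypothetical correlations among three media-bias items (invented numbers).
loadings = one_factor_loadings(r12=0.56, r13=0.48, r23=0.42)
print([round(l, 2) for l in loadings])  # [0.8, 0.7, 0.6]
```

Each recovered loading speaks to point (a): it says how strongly that one question tracks the latent variable, question by question.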

OK kids, I think now that it’s 5 am, I may have finally burned my brain out (and gotten my jetlag out) for the evening so I can go sleep for three hours now. It is so bothersome that I’ve finally got hyper synapse firing/massive intellectual inspiration/feel hungry for doing a lot of work again - being burned out was much better for sleep////